Invited Speakers (alphabetical order)

Dmitry Berenson

Talk title: Two routes toward long-horizon deformable object manipulation

Bio: Dmitry Berenson received a B.S. in Electrical and Computer Engineering from Cornell University in 2005, where he started his robotics work in Hod Lipson's lab. He went on to graduate from the Ph.D. program at the Robotics Institute at Carnegie Mellon University (CMU) in 2011, where his advisors were Siddhartha Srinivasa and James Kuffner. While at CMU, Dmitry Berenson worked in the Personal Robotics Lab and completed internships at the Digital Human Research Center in Japan, Intel Labs in Pittsburgh, and LAAS-CNRS in France. In 2012 he completed a post-doc at UC Berkeley working with Ken Goldberg and Pieter Abbeel. Dmitry Berenson was an Assistant Professor at WPI from 2012 to 2016. He joined the faculty at the University of Michigan in 2016. His current research focuses on learning and motion planning for manipulation. Dmitry Berenson has received the IEEE RAS Early Career Award and the NSF CAREER award.

Chelsea Finn

Talk title: Learning Long-Horizon Bi-Manual Tasks involving Deformable Object Manipulation

Bio: Chelsea Finn is an Assistant Professor in Computer Science and Electrical Engineering at Stanford University. Her research interests lie in the capability of robots and other agents to develop broadly intelligent behavior through learning and interaction. To this end, her work has pioneered end-to-end deep learning methods for vision-based robotic manipulation, meta-learning algorithms for few-shot learning, and approaches for scaling robot learning to broad datasets. Her research has been recognized by awards such as the Sloan Fellowship, the IEEE RAS Early Academic Career Award, and the ACM doctoral dissertation award, and has been covered by various media outlets including the New York Times, Wired, and Bloomberg. Prior to Stanford, she received her Bachelor's degree in Electrical Engineering and Computer Science at MIT and her PhD in Computer Science at UC Berkeley.

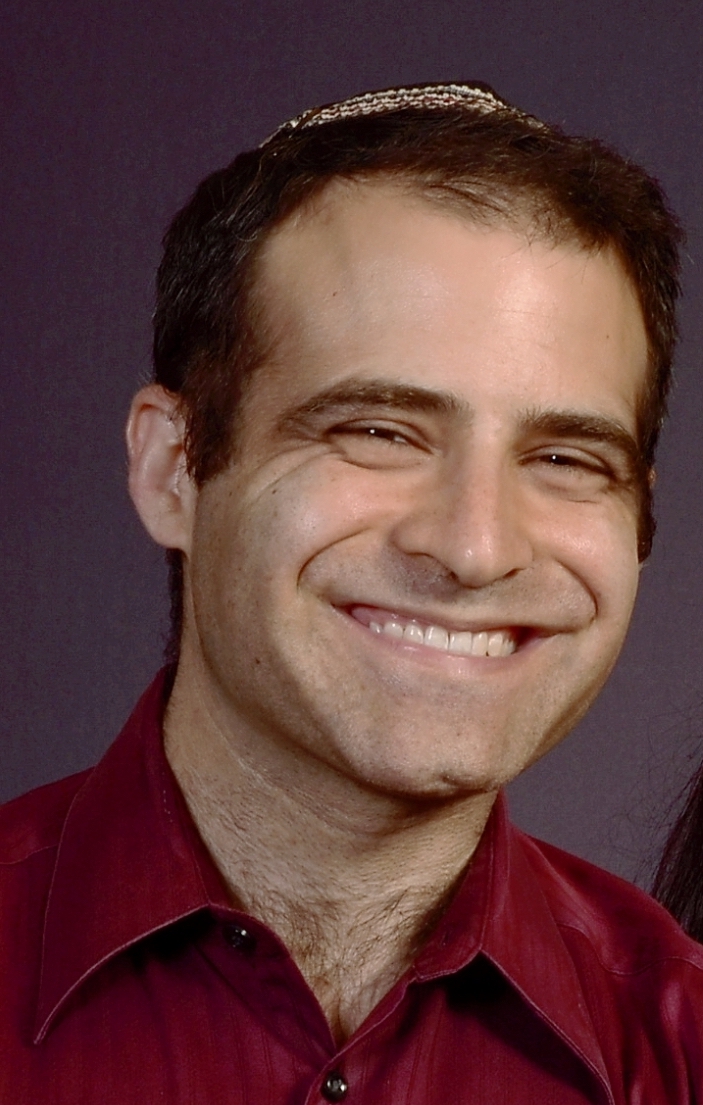

David Held

Talk title: Spatially-aware Robot Learning for Deformable Object Manipulation

Bio: David Held is an Associate Professor at Carnegie Mellon University in the Robotics Institute and is the director of the RPAD lab: Robots Perceiving And Doing. His research focuses on perceptual robot learning, i.e. developing new methods at the intersection of robot perception and planning for robots to learn to interact with novel, perceptually challenging, and deformable objects. David has applied these ideas to robot manipulation and autonomous driving. Prior to coming to CMU, David was a post-doctoral researcher at U.C. Berkeley, and he completed his Ph.D. in Computer Science at Stanford University. David also holds a B.S. and M.S. in Mechanical Engineering from MIT. David received the Google Faculty Research Award in 2017 and the NSF CAREER Award in 2021.

David Hsu

Provost's Chair Professor

National University of Singapore, Singapore

Talk title: Differentiable Particles for General-Purpose Deformable Object Manipulation

Bio: David Hsu is a professor of computer science and the Director of the Smart Systems Institute at the National University of Singapore (NUS). He is an IEEE Fellow.

His research lies at the intersection of robotics and AI. In recent years, he has been working on robot planning and learning under uncertainty for human-centered robots. His work has won multiple international awards, including, most recently, the Test of Time Award at Robotics: Science & Systems (RSS) in 2021 and the IJCAI-JAIR Best Paper Prize in 2022. He has chaired or co-chaired several international robotics conferences, including WAFR 2010, RSS 2015, ICRA 2016, and CoRL 2021. He has served on the editorial boards of the Journal of Artificial Intelligence Research and the International Journal of Robotics Research. He is currently an Editor of IEEE Transactions on Robotics.

Jeff Ichnowski

Talk title: Deformable Manipulator for Deformable Manipulation

Bio: Jeff Ichnowski is an assistant professor at Carnegie Mellon University's Robotics Institute. He was a postdoc at UC Berkeley's Sky Computing/RISE lab, AUTOLAB, and BAIR. Before returning to academia, he was the principal architect at SuccessFactors, Inc., one of the world's largest cloud-based software-as-a-service companies. His research explores robot algorithms and systems for high-speed motion, task, and manipulation planning, using cloud-based high-performance computing, optimization, and deep learning.

Gonzalo Lopez-Nicolas

Talk title: Multi-scale analysis for shape control of texture-less objects

Bio: Gonzalo Lopez-Nicolas is currently a Professor with the Universidad de Zaragoza and the Aragon Institute for Engineering Research (I3A). His current research interests include shape control, visual control, multi-robot systems, and the application of computer vision to robotics.

Michael Yip

Talk title: Deformable Manipulation for Autonomous Surgical Robots

Bio: Michael Yip, Ph.D., is an Associate Professor at the University of California San Diego and the Director of the Advanced Robotics and Controls Lab (ARClab) at UCSD. His research group works at the intersection of medical robotics, machine learning, and computer vision, with applications towards robotic surgery, physical human-robot interaction, autonomous driving, and search and rescue. His research group has won numerous awards at robotics and AI venues. Dr. Yip was previously a Research Associate with Disney Research, a Visiting Professor at Stanford University, and a Visiting Professor with Amazon Robotics.

Organizers

- Michael C. Welle, KTH Royal Institute of Technology, Sweden

- Martina Lippi, Roma Tre University, Italy

- Fangyi Zhang, Queensland University of Technology (QUT), Australia

- Lawrence Yunliang Chen, University of California, Berkeley, USA

Co-Organizers

- Alberta Longhini, KTH Royal Institute of Technology, Sweden

- Danica Kragic, KTH Royal Institute of Technology, Sweden

- Daniel Seita, University of Southern California, USA

- David Held, Carnegie Mellon University, USA

- Peter Corke, Queensland University of Technology (QUT), Australia